Deploying 100% bug-free code is the Holy Grail of software development. Everyone wants it, but sometimes it seems like an impossible quest. This is especially true if you happen to be working on a large project that contains a significant amount of legacy code. We’ve all been there: sitting at our desks, sweating bullets while pushing the deploy button and praying that none of those lovely green servers on the load balancer dashboard turn red. We develop processes like regression testing in an attempt to avoid those dangerous deploys, but bugs still slip through the cracks. What’s to be done?

It’s not just you. Delivering faulty software on a regular basis is a problem that plagues the industry. Sometimes, it feels like we’re trying to hide our own failures behind the Captain America shield of “agile” development. Bugs are part of the process, but don’t worry because we’re iterating quickly! I challenge myself not to think like that. Just because we’re able to deploy 20 times a day doesn’t excuse us from the responsibility of getting it right the first time.

Playing fast and loose like this doesn’t fly in other industries. Surgeons can’t make mistakes until they get it right. Architects can’t implement a flawed building blueprint and then correct it later. Before I started writing code for a living, I worked in an industry that has a very low tolerance for mistakes: law enforcement. Getting it wrong the first time as a police officer can have some pretty serious repercussions on someone’s life or liberty.

That’s not to say that surgeons, architects, and cops always get it right. Watch the news on any given day and you’re sure to see the ramifications of screwing up something serious. However, those other industries still have an error rate (and a fault tolerance) that is far lower than your average software development shop. So, why shouldn’t we as programmers hold ourselves to an equally high standard? Our work may not mean the difference between life and death on a daily basis, but our mistakes could result in tens of thousands of dollars (or more) worth of economic damage to our employers. Depending on your business, that might indirectly affect more lives than you think. Let’s do it right the first time.

When I worked in law enforcement, I was a criminal investigator primarily tasked with pursuing fire and arson-based crimes. I’ve spent quite a bit of time recently thinking about techniques and practices that I used as an investigator to minimize my risk of making mistakes in all aspects of my work. Minimizing risk is a way of life for a police officer. I want to apply that mindset to my work as a developer, and I also want to encourage it among my team members.

So, without further ado, here are eight techniques for raising the bar on software deliverables, from a criminal investigation perspective.

1. Maintaining Situational Awareness (Reading Code)

In law enforcement, they say that your head is “on a swivel”. Be aware of your environment. Always know what’s around you. When entering a room, your first inclination is to note the locations of all the exits. When sitting down, put your back against a corner and face the entrance so that you have line of sight on everyone who comes in. Always watch the hands of people you approach on the street. Take note of identifying details in case you need to describe someone later.

In software development terms, maintain awareness of your surroundings by reading code. Read the code that other members of your team write. Read the third-party library code in your application. Before charging ahead to implement a new feature, read the code that might be affected and understand the implications of the needed changes. In many cases, you may spend more time reading code than writing it. That’s a good thing.

2. Interviewing Witnesses (Talking to Stakeholders)

Witnesses are always one of the most valuable sources of information when conducting a criminal investigation. The problem is that most people typically aren’t very observant. Getting good information from a witness is something of a painful extraction process that requires asking very specific questions in order to exercise their memories. Part of this process involves honing your ability to “read” people. Be observant of the subtle physiological reactions that your questions elicit, and practice associating those reactions with the emotions they represent.

Effectively communicating with your stakeholders is one of the most important parts of taking a software project from start to finish. All of those same communication skills are directly applicable. Asking specific, pointed questions reassures the stakeholder that you’re both talking about the exact same thing. Being cognizant of physiological responses will help you recognize when the other person doesn’t really understand, even though they might say otherwise. That’s your signal to re-frame the explanation, probably using less technical jargon. Not everyone speaks Tech, and developers are notorious for finding the most complicated way to explain simple concepts. It’s a natural reaction for people to “fake it until they make it” even if they don’t truly understand what you’re saying. That can spell disaster when the subject at hand is project requirements.

3. Examining the Scene (Testing)

Talking to witnesses yields subjective observations, but physical evidence doesn’t forget and can’t lie. A thorough scene examination is the only way to get the objective information that you need as an investigator to draw conclusions based on fact. Conduct your examination by applying methodologies that are widely accepted, procedural, and repeatable because you will be called upon to justify them in court, under oath. Courts will not qualify expert witnesses whose methods can’t stand up under rigorous vetting.

The “procedural” part refers to the development process, not the programming language. Some people swear by Test or Behavior Driven Development. I generally practice TDD, but that doesn’t mean it’s my exclusive mantra. Maybe you’re one of those folks who believe TDD is dead. The particular school of thought doesn’t matter as long as you have some sort of process that involves testing. Most like-minded developers will probably listen as long as you can justify your methods. The non-negotiable part is that there should be tests, regardless of when they were written. Those tests will be the record of truth for future development. They are proof that the proper specification was implemented, regardless of methodology.
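To make the "record of truth" idea concrete, here is a minimal sketch of tests that double as a specification. The `apply_discount` function and its rules are hypothetical, invented purely for illustration; the point is only that each test name states a requirement a future developer can read and rely on.

```python
import unittest


def apply_discount(price_cents: int, percent: int) -> int:
    """Hypothetical example: return the price after a percentage discount.

    Works in whole cents and rounds down, so no fractional cents appear.
    """
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return price_cents * (100 - percent) // 100


class ApplyDiscountSpec(unittest.TestCase):
    """Each test documents one requirement of the (assumed) specification."""

    def test_ten_percent_off(self):
        self.assertEqual(apply_discount(2000, 10), 1800)

    def test_zero_percent_leaves_price_unchanged(self):
        self.assertEqual(apply_discount(1999, 0), 1999)

    def test_rejects_discount_over_100_percent(self):
        with self.assertRaises(ValueError):
            apply_discount(2000, 150)


if __name__ == "__main__":
    unittest.main()
```

Whether tests like these were written before or after the implementation, they give the next person who touches the code a repeatable way to check that the original requirements still hold.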

4. Consulting Your Partner (Quality Assurance)

Most investigators in agencies with enough personnel work in pairs. The reasoning is simple: Two pairs of eyes are better than one. A partner is an investigatory assistant, a sounding board for crazy theories, and a friend to watch your back all rolled into one. Working in pairs is safer and more productive than going it alone. The very presence of another person means any potential mistakes have to make it through an additional layer of protection.

Quality assurance can mean several different things depending on the work environment. Larger organizations may have a dedicated QA team. In small shops, it may be just another developer. If you’re a freelancer, QA might be you taking a fresh look after a coffee break. It’s preferable to have someone who was not involved in development QA your work, but that’s not always realistic. Even when you can hand your work to someone else, you should still be manually testing it beforehand. The existence of a QA department is not your excuse to pawn basic functionality testing off on someone else. That means not only testing the features you worked on directly, but any related systems that may have been affected as a result of your changes. There is never a reason for delivering code that hasn’t been run from a user’s perspective.

5. Preparing Charges (Documentation)

The act of sitting down and writing the report is both mandatory and exceedingly useful for the investigator. It forces the organization and presentation of thoughts. This often has the beneficial side-effect of raising new questions and revealing previously unconsidered connecting details. Furthermore, it’s an opportunity to tell the detailed story about how your conclusions were reached using sound investigatory methods. It will be the record on file that will represent you and your work in front of a judge and jury. It could be the deciding factor in someone’s guilt or innocence. Details matter. Professionalism matters. Judges don’t like spelling mistakes.

Documentation is more than just a collection of README files. It’s any written attempt to communicate the intent of your code to an audience. That could mean GitHub issue responses, Jira comments, commit messages, or any number of things. Clarity of detail and the presentation of professionalism are just as important as they are in the investigator’s written report. Documentation for a new feature should explain how the deliverable meets the original requirements. If it’s a bug, describe the process used to diagnose and repair the problem in such a way that a reader could duplicate your actions. Consider your audience and write at a technical level that is appropriate for the reader. The goal is not to impress everyone with the depths of your knowledge, but rather to communicate well enough that the reader doesn’t need to ask any follow-up questions.

6. Obtaining a Warrant (Code Review)

Writing an arrest warrant is a detailed, often frustrating process. It’s a request to take away someone’s freedom. Not only must you carefully, laboriously lay out the facts of your case and the conclusions that you drew as a result, but you must do so using a very specific presentation style and format. Getting a warrant signed means bringing it in person to a judge at the courthouse. The judge reviews your application and, if he or she approves, has you swear an oath in their presence. This means that every mistake or forgotten detail in the warrant results in one more round trip to the office and back. That’s serious motivation to get it right the first time.

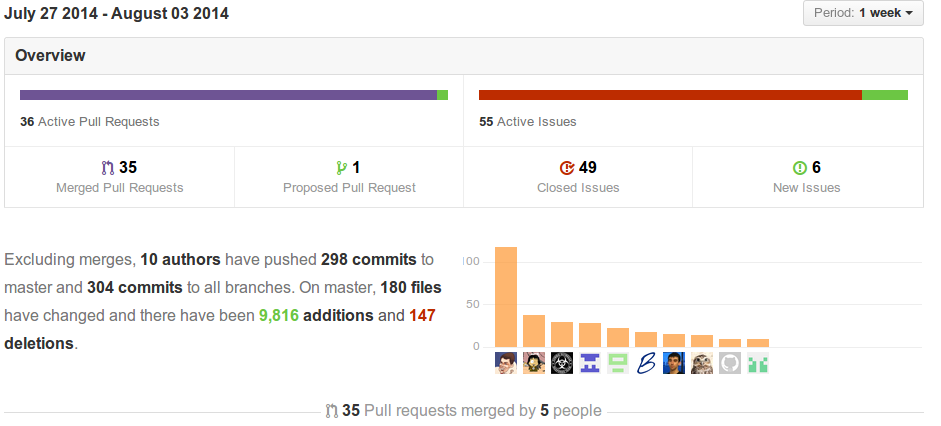

Code review is asking for a warrant that, once signed, will allow you to deploy. Developers don’t have to raise their right hands and swear an oath before receiving approval, but the vetting process should still be equally rigorous depending on the scope of change. The review may be a semi-formalized process depending on the organization, or it could be as simple as pinging a friend and asking them to review a pull request. The mechanics are not important as long as it means getting your code in front of someone else. The best reviewer is another developer who wasn’t involved in writing the code. A fresh perspective will often lead to architectural and functional improvements.

7. Making the Arrest (Deploying)

Making an arrest requires careful planning and coordination with an overall goal of controlling the environment where the arrest will be made. The best way to accomplish this is to maintain the element of surprise. Learn the target’s routine and choose a time and place where you will have a tactical (and preferably numerical) advantage. It is difficult and dangerous to make an arrest in a place that you are unfamiliar with, such as the suspect’s house. Regardless of location, maintain a heightened level of awareness and anticipation until the suspect is in jail and you are back at home or in the office. A good arrest is a well-executed plan where conflict is kept to a minimum. When successful, it represents the culmination of days, weeks, or perhaps months of work.

A successful deployment is the reward at the end of the development cycle, but it too requires careful planning and coordination. Maintaining a tactical advantage means picking the right deployment strategy. End users should remain blissfully unaware of any update rollouts or restarting services. If there must be some kind of interruption, keep it as minimal as possible and choose a time that is convenient for the majority of users. Additionally, releasing code into the wild does not mean the deploy is done. There is a Danger Zone immediately following a release which may last anywhere from a few minutes to a few hours depending on the application and scope of change. Maintain a heightened sense of awareness during this time by using all available monitoring tools. Indications that something is wrong with new code may be buried in the middle of a long stack trace for something seemingly unrelated. If you work in an organization where someone else is deploying your code, the responsibility for knowing when it’s happening and subsequently monitoring the rollout still rests with you.
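One way to keep watch during the Danger Zone is to compare the error rate after a release against a baseline taken before it. The sketch below is only an illustration under assumptions: the `Sample` type, the `tolerance` multiplier, and the `floor` value are all made up here, and real numbers would come from whatever monitoring tools you already use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Sample:
    """One monitoring interval: how many requests were served and how many failed."""
    requests: int
    errors: int


def error_rate(samples: list[Sample]) -> float:
    """Fraction of failed requests across all samples (0.0 if there was no traffic)."""
    total = sum(s.requests for s in samples)
    if total == 0:
        return 0.0
    return sum(s.errors for s in samples) / total


def release_looks_unhealthy(
    baseline: list[Sample],
    after_release: list[Sample],
    tolerance: float = 2.0,  # assumed: allow up to 2x the baseline error rate
    floor: float = 0.001,    # assumed: minimum baseline so a zero baseline is not hypersensitive
) -> bool:
    """Return True when the post-release error rate exceeds the allowed threshold."""
    threshold = max(error_rate(baseline), floor) * tolerance
    return error_rate(after_release) > threshold


if __name__ == "__main__":
    before = [Sample(1000, 2), Sample(1000, 3)]
    after = [Sample(500, 10)]
    print(release_looks_unhealthy(before, after))
```

A check like this does not replace reading logs and dashboards during the rollout; it only gives you an early, objective signal that something needs a closer look.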

8. Continuing Education (Retrospective)

An investigator’s learning is never complete. There are minimum levels of government-mandated training that cover a wide array of concepts from firearms to law, but good investigators go above and beyond by seeking out sources of knowledge for staying at the forefront of their field. This includes looking critically at past cases for areas of possible improvement. The most useful resource in a group of investigators is their collective past experience.

Developers don’t have mandated continuing education, so it’s each person’s individual responsibility to continue honing their craft. Reading blog posts and tech news, listening to podcasts, learning new languages and frameworks, and experimenting with side projects are all forms of continuing education. When it comes to specific projects, retrospectives are a great way to examine both the positives and negatives of the development cycle after delivering a particular feature or product. Some organizations have a formal retrospective process, but as an individual there’s nothing wrong with taking a moment after finishing a deliverable to reflect on the experience. Recognize what went well and what didn’t. Come up with ideas for how to perpetuate the former while correcting the latter.

Conclusion

This ended up being a bit long-winded, but it turned out to be a useful exercise in organizing my thoughts around a topic that had been floating in my head for a while. I’ve had varying degrees of success in applying these principles to my own work, but I will continue trying. We all want to deliver bug-free code, and the very act of recognizing that we can improve is a step in the right direction.
